Operationalizing E23: Scaling AI Governance Without Slowing Down AI

Context: E23 compliance expectations and the AI governance bottleneck

OSFI’s Guideline E-23 (referred to here as E23) is pushing organizations, particularly in financial services, to govern AI more explicitly as part of model risk management. The central challenge is doing so in a way that remains workable as AI adoption accelerates. Many organizations have identified hundreds or thousands of AI use cases, yet a significant portion remain “stuck,” waiting to move into production.

A common response to new compliance expectations is to add reviews, checkpoints, and manual steps to existing model risk practices. This approach sets governance against speed, and it does not scale when AI changes quickly, is updated frequently, and requires more continuous oversight than periodic review cycles provide.

Get your copy of OSFI’s Guideline E-23 and Model Risk Management in Canada.

The false trade-off: more governance does not have to mean less speed

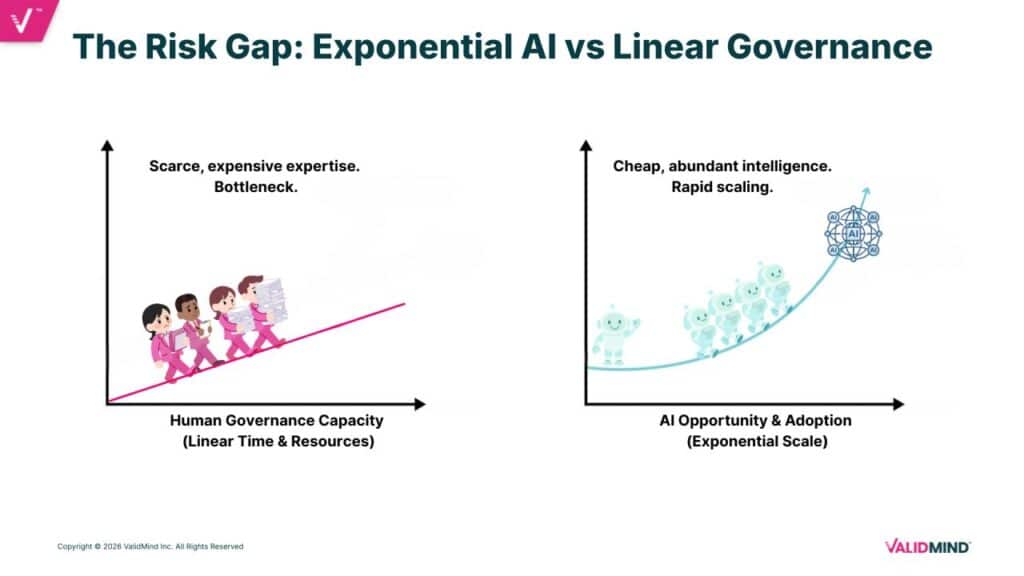

Historically, governing more models meant hiring more experts and spending more time documenting, testing, validating, and monitoring. That linear capacity model breaks down when applied to AI. AI development and deployment require velocity, and the traditional approach of adding manual tollgates slows organizations in ways that do not work for modern AI programs.

The scaling problem becomes more acute as organizations anticipate that the number of models and AI systems in use will double, triple, or grow by an order of magnitude over a short period. Applying the same manual checkpoints and processes to a rapidly expanding inventory does not scale.

Why traditional model risk practices struggle with AI

Model risk practices have often relied on manual reviews and manual documentation, with controls defined after a model is built. AI introduces broader and less isolated risk considerations, including increased data usage and risks spanning cybersecurity, fraud, and other organizational domains. AI governance also spans multiple groups, which leaves ownership unclear and complicates accountability and execution.

Manual processes also contribute to outdated model risk inventories, since they require periodic manual editing to reflect what is actually happening. If the lifecycle for getting AI into production remains heavily manual, it fails to scale and cannot keep pace with competitive pressures.

A scalable approach: embed governance and automate the AI lifecycle

A scalable AI governance approach requires governance to be embedded throughout the organization rather than added as manual steps. Controls need to be built into the AI itself and into the surrounding ecosystem, so that each system’s capabilities are constrained, and adjusted over time, in line with organizational expectations.

This approach includes automation across documentation, testing, validation, and monitoring. It also requires alignment across model risk, AI governance, engineering, data governance, cybersecurity, and fraud teams, with monitoring performed at a frequency appropriate to the risk level.
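
As a minimal sketch of what "frequency appropriate to the risk level" could look like in practice, the snippet below maps a risk tier to a monitoring interval and decides whether a check is due. The tier names, the intervals, and the `is_check_due` helper are illustrative assumptions, not values taken from E23.

```python
from datetime import datetime, timedelta

# Illustrative intervals only (assumption); real cadence is a policy decision.
MONITORING_INTERVAL = {
    "high": timedelta(days=1),
    "medium": timedelta(days=7),
    "low": timedelta(days=30),
}


def is_check_due(tier: str, last_checked: datetime, now: datetime) -> bool:
    """Return True when the time since the last check meets or exceeds the tier's interval."""
    return now - last_checked >= MONITORING_INTERVAL[tier]
```

A scheduler could call `is_check_due` for every entry in the AI inventory, so that high-risk systems are re-examined far more often than low-risk ones without anyone tracking dates by hand.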

Risk-based governance under E23: tiering models and focusing expert time

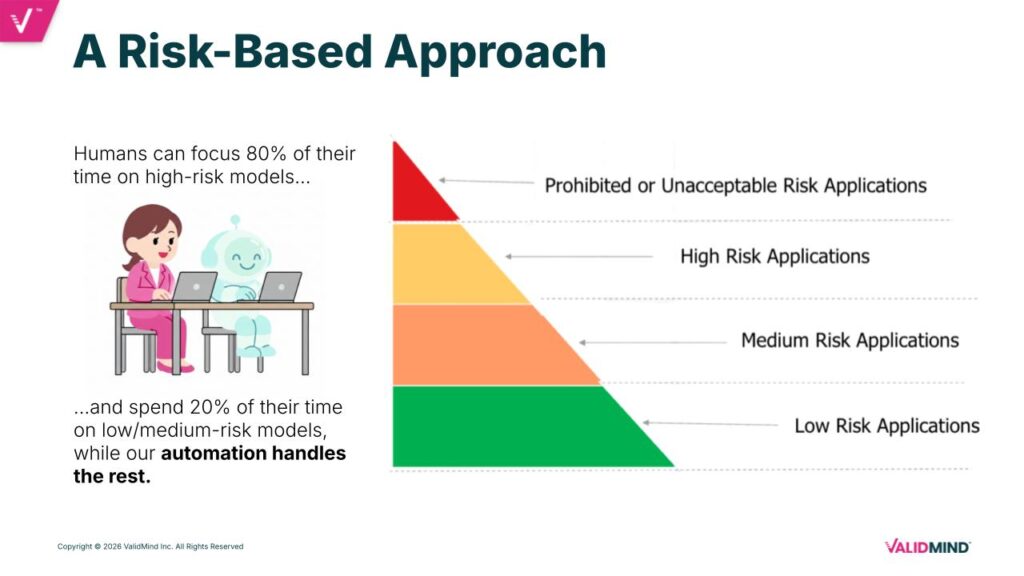

A core principle of E23 is a risk-based approach. This requires a consistent method to categorize and tier AI from high risk to low risk. Typically, there are relatively few high-risk models that are critical and risky to the business, and many lower- to medium-risk applications.

Robust AI governance depends on ensuring experts spend the majority of their time on high-risk models, while lower- and medium-risk applications move through a more automated lifecycle with appropriate human oversight. Lower-risk applications can still deliver significant organizational benefit, which increases the importance of streamlining their path to production while maintaining E23-aligned controls.

This tiering enables two pathways: an expert-led, human-in-the-loop approach for high-risk AI with advanced validation, and a highly automated approach for lower- to medium-risk AI that streamlines documentation, testing, regulatory checks, and continuous monitoring.
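
The tiering and the two pathways described above can be sketched roughly as follows. The scoring factors, the 1-to-5 scale, the thresholds, and the names `UseCase`, `assign_tier`, and `pathway` are all hypothetical; an actual rubric would come from the organization's own risk methodology.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class UseCase:
    name: str
    # Hypothetical 1-5 scores (assumption); not factors prescribed by E23.
    financial_impact: int
    customer_impact: int
    autonomy: int


def assign_tier(uc: UseCase) -> Tier:
    """Tier on the worst single factor so one severe score is not averaged away."""
    worst = max(uc.financial_impact, uc.customer_impact, uc.autonomy)
    if worst >= 4:
        return Tier.HIGH
    if worst == 3:
        return Tier.MEDIUM
    return Tier.LOW


def pathway(tier: Tier) -> str:
    """High-risk AI goes to expert-led review; everything else takes the automated path."""
    return "expert-led" if tier is Tier.HIGH else "automated"
```

Using the maximum rather than an average is one possible design choice: it keeps the method simple to explain and defend, at the cost of sending some borderline cases to expert review.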

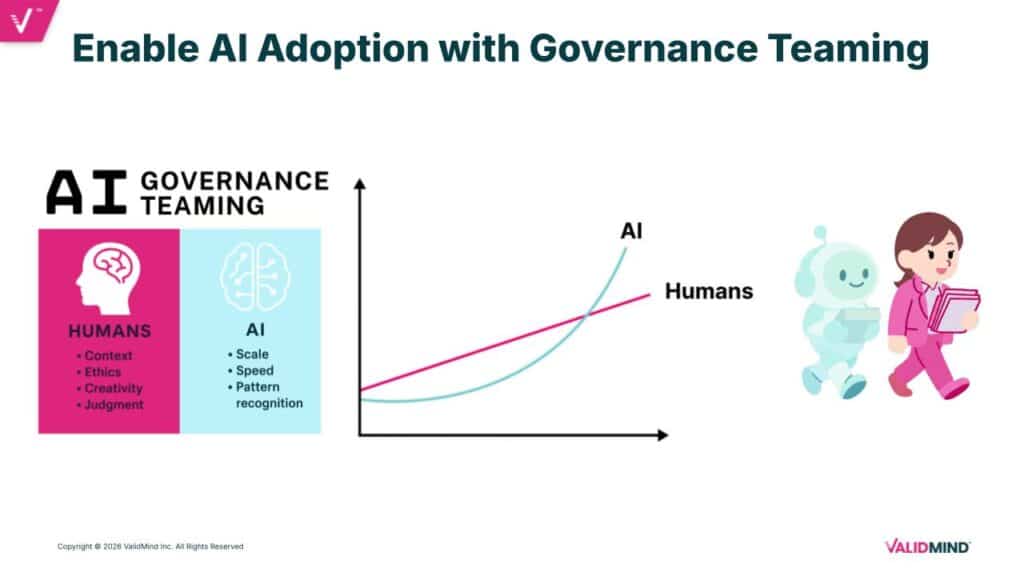

AI governance teaming: using AI and humans together to govern AI

Keeping pace with AI’s rate of change requires including AI in the AI governance process while maintaining the human element. This “AI governance teaming” model pairs human expertise with purpose-built AI to govern AI in tandem. The objective is to maintain governance quality while achieving the speed and scale required for frequent model updates, retesting, and expanded deployment across use cases.

Operationalizing controls: built-in safeguards, monitoring, and kill switches

Controls built into AI agents and AI systems are intended to prevent situations where an agent “goes rogue” or performs transactions it should not. They detect when performance or behavior degrades and can then tighten restrictions, reduce capability, or turn the agent off until a review is completed.

Monitoring needs to be tied to risk level and connected to controls that can immediately disable systems or limit functionality to reduce potential harm. This operational model also requires a centralized system of record where AI status and control actions are visible and enforceable, rather than requiring teams to manage shutdowns and restrictions across multiple disconnected environments.
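
One way to picture monitoring tied to enforceable controls is a small registry that acts as the system of record and changes an agent's status when a monitored metric crosses a threshold. This is only a sketch: `ControlRegistry`, `apply_monitoring`, the error-rate thresholds, and the read-only action list are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    ACTIVE = "active"
    RESTRICTED = "restricted"
    DISABLED = "disabled"


# Hypothetical set of actions still permitted while an agent is restricted.
READ_ONLY_ACTIONS = {"read", "summarize"}


@dataclass
class ControlRegistry:
    """Hypothetical central system of record for agent status and control actions."""
    status: dict[str, Status] = field(default_factory=dict)
    log: list[tuple[str, Status, str]] = field(default_factory=list)

    def set_status(self, agent_id: str, new_status: Status, reason: str) -> None:
        self.status[agent_id] = new_status
        self.log.append((agent_id, new_status, reason))  # audit trail

    def is_allowed(self, agent_id: str, action: str) -> bool:
        # Unknown agents are treated as disabled (fail closed).
        current = self.status.get(agent_id, Status.DISABLED)
        if current is Status.ACTIVE:
            return True
        if current is Status.RESTRICTED:
            return action in READ_ONLY_ACTIONS
        return False


def apply_monitoring(registry: ControlRegistry, agent_id: str, error_rate: float,
                     restrict_at: float = 0.05, disable_at: float = 0.15) -> Status:
    """Tighten controls when thresholds are crossed; never loosen them automatically."""
    if error_rate >= disable_at:
        registry.set_status(agent_id, Status.DISABLED, f"error rate {error_rate:.2f}")
    elif error_rate >= restrict_at:
        registry.set_status(agent_id, Status.RESTRICTED, f"error rate {error_rate:.2f}")
    return registry.status.get(agent_id, Status.DISABLED)
```

In this sketch a healthy reading does not restore a restricted or disabled agent; reactivation would be an explicit `set_status` call made after human review, which mirrors the "until a review is completed" behaviour described above.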

Dashboarding is a critical component. Executives need fast access to the current state of AI systems, and incident response cannot depend on slow deep dives followed by delayed reporting. Information needs to be available quickly when something happens.

Automating documentation, testing, validation, and monitoring with templates and checks

Efficiency gains begin with a library of documentation templates tailored to model type, risk tier, and business context. Templates define what must be documented for a given AI system, enabling consistent evidence capture.

Automation then supports the lifecycle:

- Tests are automated and run wherever possible.

- Results are interpreted and documented with developer insights.

- Human and AI collaboration produces a fully completed document containing the latest tests, results, and documented insights.

- A “checker” interrogates the completed documentation against E23 expectations, best practices, or a combination of both before it reaches validation teams.
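
The template and "checker" steps above could be approximated, in their simplest form, as a completeness check of a document against the sections its template requires. The section names and the `check_document` helper below are hypothetical and are not a list of E23 requirements; a real checker would also assess the quality of the content, not just its presence.

```python
# Hypothetical required sections per risk tier (assumption, not E23 text).
REQUIRED_SECTIONS = {
    "low": ["purpose", "data_sources", "test_results"],
    "high": [
        "purpose",
        "data_sources",
        "test_results",
        "limitations",
        "independent_validation",
    ],
}


def check_document(doc: dict[str, str], tier: str) -> list[str]:
    """Return the required sections that are missing or blank for the given tier."""
    return [
        section
        for section in REQUIRED_SECTIONS[tier]
        if not doc.get(section, "").strip()
    ]
```

An empty result would let the document proceed to validation; a non-empty one could be routed back to the developer with the list of gaps.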

Validation follows a similar pattern, including automated testing, comparison of developer evidence with independent tests, full documentation of outcomes, and rechecking against E23 expectations. Monitoring is then set up with the same emphasis on automation and frequency aligned to risk.
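
The comparison of developer evidence with independent tests might look like the following sketch, which labels each developer-reported metric as confirmed, discrepant, or not independently tested. The function name `compare_evidence`, the labels, and the default tolerance are illustrative assumptions.

```python
def compare_evidence(developer: dict[str, float],
                     independent: dict[str, float],
                     tolerance: float = 0.02) -> dict[str, str]:
    """Classify each developer-reported metric against the validator's own result."""
    findings = {}
    for metric, dev_value in developer.items():
        if metric not in independent:
            findings[metric] = "not independently tested"
        elif abs(dev_value - independent[metric]) > tolerance:
            findings[metric] = "discrepancy"
        else:
            findings[metric] = "confirmed"
    return findings
```

The resulting findings can be written straight into the validation document, so that discrepancies are recorded alongside the evidence that produced them.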

For low-risk models, the process is designed to be almost fully automated: selecting or suggesting the right template, identifying required tests, documenting results, routing for human review and approval through workflow, and evaluating the final documentation against relevant regulations. For high-risk models, the same capabilities apply, with more detailed human review, editing, and oversight.

Integration as a practical requirement for scalable AI governance

Integration within existing modeling ecosystems is a key requirement for operationalizing AI governance without excessive friction. Tests should run in the same environment where work is performed, with results sent to populate documentation in a light-touch way. This reduces unnecessary data movement while maintaining an auditable record with results pulled directly from source environments.

Key implications: governance must connect to live AI systems

AI governance cannot remain a manual approximation of what is happening. Governance platforms and controls need to communicate directly with AI agents and business systems, linking limitations and authority to real-time conditions. When defined conditions are met, controls can change an agent’s abilities or turn it off, with alerts and status managed through a single source of truth.

This model treats AI agents as digital workers with a management layer that assigns authority, monitors behavior, and can revoke authority instantly.

Next steps for implementing E23-aligned AI governance at scale

A practical set of next steps includes building an AI inventory and establishing a consistent, logical, defensible method for tiering AI. E23 requirements then need to be mapped to each stage of the model and AI lifecycle, with automation applied wherever possible across documentation, developer-to-validator handoffs, and monitoring.

Time-intensive steps should be identified and targeted for automation. AI can be used to simplify and remove complexity, with pilots and iteration used to build organizational knowledge and feedback loops that support scaling in a way appropriate to risk and compliance expectations.
